Neural Node 3234049173 Apex Prism

Neural Node 3234049173 Apex Prism combines signals from individual neural nodes in a modular apex topology intended to speed up learning and inference. The design relies on parallelism, data locality, and targeted caching to make gradient propagation and asynchronous updates more efficient. It is meant to be tuned through structured experimentation, metric-driven adjustment, and ablation studies. Latency, throughput, and interpretability are its main evaluation criteria across deployment contexts, including edge inference. Whether the resulting system is scalable and traceable depends on how these elements interact in practice.

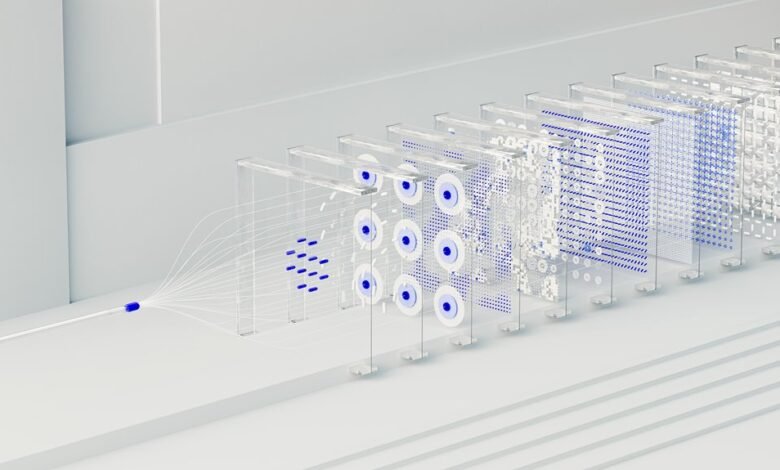

What Is Neural Node 3234049173 Apex Prism?

Neural Node 3234049173 Apex Prism is a representative component of a hypothetical neural architecture for advanced data processing. It acts as a discrete functional unit that integrates neural node signals into an apex prism topology. The configuration targets faster learning and more efficient inference, and it is meant to scale while keeping module boundaries clear enough for rigorous analysis.

How Apex Prism Accelerates Learning and Inference

Apex Prism accelerates learning and inference by decomposing complex computations into modular, interlinked units that exploit parallelism and data locality.

The architecture enables scalable gradient propagation, targeted caching, and asynchronous updates.
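As a rough illustration of modular units that run in parallel and reuse cached results, consider the minimal sketch below. It is not the Prism's actual implementation: `unit_forward`, `run_units`, and the scalar arithmetic are hypothetical stand-ins, and a thread pool plus `functools.lru_cache` are simply convenient standard-library tools for showing the idea.

```python
from concurrent.futures import ThreadPoolExecutor
from functools import lru_cache
from typing import List


@lru_cache(maxsize=128)
def unit_forward(unit_id: int, x: float) -> float:
    """Hypothetical unit: a cheap scalar transform standing in for a real sub-computation.

    Results are cached per (unit_id, x), so repeated inputs skip recomputation.
    """
    return (unit_id + 1) * x


def run_units(x: float, num_units: int = 4) -> List[float]:
    """Evaluate independent units concurrently and return outputs in unit order."""
    with ThreadPoolExecutor(max_workers=num_units) as pool:
        return list(pool.map(lambda i: unit_forward(i, x), range(num_units)))


outputs = run_units(2.0)   # first call fills the cache
outputs = run_units(2.0)   # second call is served from the cache
print(outputs, unit_forward.cache_info())
```

The units here share no state, which is what makes the parallel map safe. Real units with shared parameters would need explicit synchronization or an asynchronous update scheme.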

Learning is optimized through structured experimentation, ablation, and metric-driven tuning.
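An ablation study of this kind can be sketched as a loop that disables one component at a time and records how much the metric drops. Everything named here is an assumption for illustration: the component names, the `toy_evaluate` scorer, and its integer scores are made up and do not come from any measured system.

```python
from typing import Callable, Dict, Sequence


def ablation_study(
    components: Sequence[str],
    evaluate: Callable[[Dict[str, bool]], int],
) -> Dict[str, int]:
    """Return the metric drop caused by disabling each component on its own."""
    baseline = evaluate({name: True for name in components})
    deltas = {}
    for name in components:
        # Keep every component enabled except the one under test.
        config = {c: (c != name) for c in components}
        deltas[name] = baseline - evaluate(config)
    return deltas


def toy_evaluate(config: Dict[str, bool]) -> int:
    """Hypothetical scorer: a base score plus a fixed bonus per enabled component."""
    bonus = {"caching": 5, "async_updates": 10, "locality": 2}
    return 70 + sum(points for name, points in bonus.items() if config[name])


deltas = ablation_study(["caching", "async_updates", "locality"], toy_evaluate)
print(deltas)
```

In a real study, `evaluate` would train or benchmark the model under each configuration, and the results would usually be averaged over several seeds before components are compared.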

Inference is measured by latency, throughput, and calibration, so that performance stays consistent and interpretable across workloads without overfitting to a particular one.
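The three measurements can be sketched with the standard library alone. The function names `benchmark` and `expected_calibration_error` are hypothetical, and expected calibration error (ECE) is used here only as one common calibration measure; the text does not say which one the Prism would use.

```python
import statistics
import time
from typing import Callable, Dict, Sequence


def benchmark(fn: Callable, inputs: Sequence) -> Dict[str, float]:
    """Time fn on each input (inputs must be non-empty) and summarize the results."""
    latencies = []
    start = time.perf_counter()
    for x in inputs:
        t0 = time.perf_counter()
        fn(x)
        latencies.append(time.perf_counter() - t0)
    elapsed = time.perf_counter() - start
    return {
        "median_latency_s": statistics.median(latencies),
        "max_latency_s": max(latencies),
        "throughput_per_s": len(inputs) / elapsed if elapsed > 0 else float("inf"),
    }


def expected_calibration_error(
    confidences: Sequence[float], correct: Sequence[bool], n_bins: int = 10
) -> float:
    """Weighted average gap between accuracy and mean confidence per confidence bin."""
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # confidence 1.0 goes in the last bin
        bins[idx].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / total) * abs(accuracy - avg_conf)
    return ece


stats = benchmark(lambda x: x * x, list(range(1000)))
print(stats)
print(expected_calibration_error([1.0, 1.0], [True, False]))
```

A production benchmark would normally add warm-up runs and report tail percentiles such as p95 or p99, since the median alone can hide latency spikes under load.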

Deploying the Prism: Integration, Architecture, and Use Cases

Deploying the Prism means aligning integration, architecture, and use-case evaluation across the target environments. Integration depends on modular interfaces and interoperable components. Neural updates are synchronized with deployment cycles, and edge deployments are tuned for local inference.

Use-case mapping clarifies domain-specific requirements and guides resource allocation, latency targets, and governance, which yields repeatable, auditable deployment patterns.
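One way to make such a mapping auditable is to record each use case as an immutable profile and check measurements against it. The sketch below assumes a hypothetical `UseCaseProfile` type with illustrative fields and example numbers; none of them come from an actual Prism deployment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCaseProfile:
    """Hypothetical record of the requirements for one deployment use case."""
    name: str
    target: str               # for example "edge" or "server"
    latency_budget_ms: float  # upper bound on acceptable p95 latency
    max_memory_mb: int        # memory available to the model


def meets_budget(profile: UseCaseProfile, measured_p95_ms: float) -> bool:
    """Return True when the measured p95 latency fits the profile's budget."""
    return measured_p95_ms <= profile.latency_budget_ms


# Illustrative values only.
profile = UseCaseProfile("keyword-spotting", "edge", 50.0, 256)
print(meets_budget(profile, 42.0), meets_budget(profile, 75.0))
```

Freezing the dataclass prevents a profile from being changed after it has been reviewed, which supports the repeatability the mapping is meant to provide.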

Evaluating Benefits: Convergence, Latency, and Interpretability

Evaluation centers on three core metrics: convergence behavior, system latency, and interpretability. Convergence analysis quantifies the trade-off between stability and speed across configurations, while latency benchmarks show real-time responsiveness under varying load. Interpretability is assessed through traceability and explainability, so that decisions remain transparent. Systematic comparison supports informed governance and leaves teams free to choose architectures aligned with their strategic goals.
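The stability-versus-speed trade-off in convergence can be shown on a toy problem. The sketch below assumes plain gradient descent on f(w) = w², with hypothetical helpers `gd_losses` and `steps_to_converge`. It stands in for a real training run and says nothing about how the Prism itself converges.

```python
from typing import List, Optional


def gd_losses(lr: float, w0: float = 1.0, max_steps: int = 50) -> List[float]:
    """Run gradient descent on f(w) = w**2 and record the loss before each update."""
    w = w0
    losses = []
    for _ in range(max_steps):
        losses.append(w * w)
        w -= lr * 2 * w  # the gradient of w**2 is 2w
    return losses


def steps_to_converge(losses: List[float], threshold: float) -> Optional[int]:
    """Return the first step whose loss is at or below threshold, or None if never reached."""
    for step, loss in enumerate(losses):
        if loss <= threshold:
            return step
    return None


for lr in (0.1, 0.4, 1.1):
    print(lr, steps_to_converge(gd_losses(lr), threshold=0.01))
```

With these settings the small learning rate converges slowly, the medium one converges in a couple of steps, and the large one diverges and never reaches the threshold. That is the kind of configuration comparison the convergence metric is meant to capture.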

Conclusion

The analysis positions Neural Node 3234049173 Apex Prism as a modular, scalable unit that combines parallel processing with structured data locality. In principle, this design should improve convergence, reduce latency, and make behavior easier to trace across deployment scenarios. Like a finely tuned instrument in an orchestra, the Prism aligns its components toward coherent performance targets while preserving interpretability. These benefits depend on disciplined ablation studies, metric-driven tuning, and governance-aligned monitoring to sustain robustness under real-world variability.